Quick Answer: Colocation and on-premise infrastructure are both models for housing your own physical servers, but they differ significantly in who owns and manages the facility around them.

On-premise means your organisation bears every cost (power, cooling, physical security, compliance, and staffing) within a space you control. Colocation means you keep ownership of your hardware while housing it in a professionally managed, purpose-built data centre that handles the facility infrastructure.

For most Canadian enterprises, the financials favour colocation once the full picture is on the table, but the right answer depends on workload type, compliance requirements, and how your organisation accounts for total cost of ownership.

Key Takeaways

- On-premise infrastructure carries significant hidden costs that most IT budgets fail to fully account for, from power and cooling to compliance certifications and after-hours staffing.

- Colocation is not managed hosting. You own and operate your own hardware; you are simply placing it in a better facility.

- Modern GPU and AI workloads often pull 20–40kW per rack, which most commercial office environments simply cannot support.

- Canadian data sovereignty adds a layer to this decision that you can’t ignore. Where data physically sits is only one piece of a multi-part compliance question.

- Qu Data Centres operates nine facilities across five Canadian markets with capacity available today, giving enterprises a sovereign, enterprise-grade colocation option without a multi-year wait. Book a tour today.

When an organisation builds or expands its server infrastructure, the decision about where those servers live tends to be made too quickly. A rack in the server room feels like the obvious choice. It is physically close, it feels controllable, and the sunk cost of existing hardware makes change seem expensive.

The problem is that most of those instincts don't hold up when you do the math. Power bills compound annually. Cooling systems age and need replacement. Compliance audits start asking harder questions about physical security documentation and fire suppression specifications. On-call coverage gets absorbed by IT staff who should be working on something else.

At the same time, colocation keeps gaining ground. It is no longer just a place to put hardware when you run out of room.

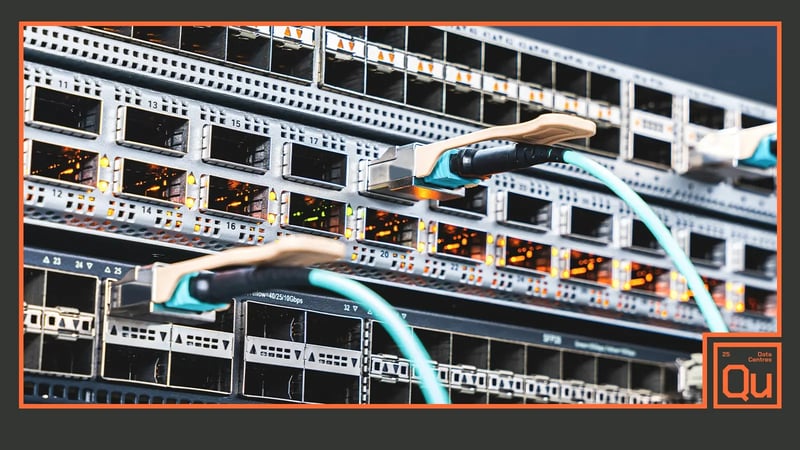

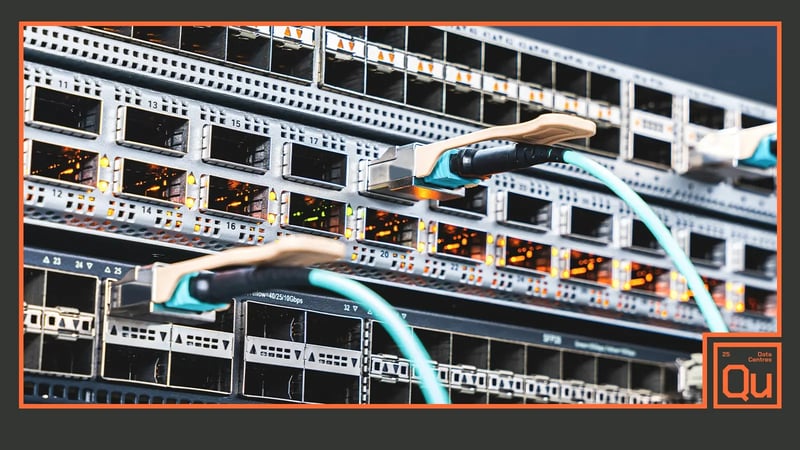

Modern colocation facilities are engineered for the power densities that AI and high-performance compute workloads actually require, and the connectivity ecosystems inside those facilities have become infrastructure assets in their own right.

Here’s what you need to know.

What Is Colocation Vs On-Premise Infrastructure?

Before comparing costs and use cases, it helps to be precise about what these two models actually mean. They are often conflated with managed hosting or cloud services, and that confusion leads to the wrong questions being asked.

How On-Premise Server Infrastructure Works

On-premise infrastructure means your organisation owns the servers, owns or leases the physical space they occupy, and bears full operational responsibility for everything around them.

That includes the power delivery systems, the uninterruptible power supplies, the cooling equipment, physical access controls, fire suppression systems, network cabling, and the human beings who maintain all of it. When something fails at 2 AM, whether a cooling unit, a UPS bank, or a power distribution unit, it is your problem to resolve, on your timeline, with your resources.

This model gives organisations maximum direct control. It also means maximum direct exposure to every cost and risk that comes with operating a data centre environment, regardless of the scale.

What Colocation Means For Your Server Architecture

Colocation is frequently misunderstood as a form of outsourced IT. It is not.

In a colocation arrangement, your organisation still owns the physical servers, networking gear, and storage equipment. What you are renting is space inside a professionally managed facility, along with access to that facility's power infrastructure, cooling systems, physical security, fire suppression, and carrier connectivity. The data centre operator keeps the lights on, the temperature stable, and the generators fuelled. Your team manages the hardware that sits inside it.

The difference here matters because it affects how you evaluate the trade-off. Colocation is not giving up control of your environment. It is offloading the facility management that sits around your environment to specialists who do it at scale.

Practical Considerations When Running On-Premise Infrastructure

On-premise infrastructure is not inherently the wrong choice, and for certain organisations it represents a legitimate, well-reasoned decision.

The problem is not the model itself; it’s the responsibility. There are real advantages to running your own environment, but those advantages come with a set of ongoing costs that tend to get underestimated at budget time and felt acutely over the following few years.

Direct Control Over Your Physical Environment

The most defensible argument for on-premise infrastructure is ownership of the physical environment. Your team has unmediated access to the hardware at any time, with no ticketing process, no access request, and no dependency on a third party's response time.

For organisations with strong in-house IT teams, that direct access translates into faster troubleshooting cycles and the ability to make hardware-level changes without coordinating with anyone outside the organisation.

There is also a psychological dimension to this that matters in regulated environments. Audit committees and boards often find it easier to visualise risk when the infrastructure is physically present and directly controlled.

That said, direct access only delivers value when the team exercising it is adequately staffed, properly trained, and available around the clock. For organisations without that coverage, which describes a larger share of Canadian mid-market organisations than most IT leaders are comfortable admitting, that control exists on paper more than in practice.

Power and Cooling Add Up Faster Than Expected

Running servers reliably requires more than plugging them in. A properly configured on-premise environment needs each of the following to function at a baseline level:

- Redundant Power Feeds: At least two independent power circuits per rack to avoid a single point of failure

- UPS Systems: Uninterruptible power supplies to bridge the gap between a utility outage and generator pickup

- Backup Generators: With onsite fuel storage sized for meaningful runtime at full load

- Precision Cooling: Computer room air conditioning units calibrated to the heat load of the environment

- Containment Architecture: Hot/cold aisle separation or raised floor systems to direct airflow efficiently

Each of these is a capital purchase. Each one also carries ongoing maintenance costs. None of it is optional if the environment is housing workloads that cannot tolerate unplanned downtime.

The compounding effect is where most companies smash through budget constraints. A single rack provisioned for a modest 5kW, running below max load at an average of roughly 3 to 4kW, consumes about 25,000–35,000 kWh annually (average draw multiplied by the 8,760 hours in a year). At commercial electricity rates in Ontario or Alberta, that still translates to $2,500 to $6,000 per year in electricity costs for one rack alone, before adding the cooling overhead (often 30–50% more) needed to remove that heat. If you're pushing systems harder toward max load, these numbers climb significantly higher.
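The arithmetic behind figures like these is easy to check for your own environment. The sketch below is a minimal illustration, not a billing model: the average draws (3 and 4 kW) and the rates ($0.10 and $0.17 per kWh) are assumptions chosen only because they roughly reproduce the ranges quoted above, not published utility tariffs, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(average_draw_kw: float) -> float:
    """Energy used in a year by a rack drawing `average_draw_kw` continuously."""
    return average_draw_kw * HOURS_PER_YEAR


def annual_power_cost(average_draw_kw: float, rate_per_kwh: float,
                      cooling_overhead: float = 0.0) -> float:
    """Yearly electricity cost, with cooling as a fraction of the IT load."""
    it_cost = annual_energy_kwh(average_draw_kw) * rate_per_kwh
    return it_cost * (1.0 + cooling_overhead)


# Assumed scenarios: (average draw in kW, rate in $/kWh, cooling overhead).
low = annual_power_cost(3.0, 0.10, cooling_overhead=0.30)
high = annual_power_cost(4.0, 0.17, cooling_overhead=0.50)

print(f"Energy at 3 kW average: {annual_energy_kwh(3.0):,.0f} kWh")
print(f"Energy at 4 kW average: {annual_energy_kwh(4.0):,.0f} kWh")
print(f"Cost incl. cooling, low case:  ${low:,.0f}")
print(f"Cost incl. cooling, high case: ${high:,.0f}")
```

Swapping in your own metered average draw and the rate from a recent utility bill gives a per-rack figure you can multiply across the room.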

An N+1 cooling configuration, the minimum standard for mission-critical environments, means you are purchasing and maintaining redundant capacity that largely sits idle.
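To see why N+1 capacity sits largely idle in a small room, it helps to size one. The sketch below assumes a hypothetical 8 kW heat load served by 10 kW cooling units; both numbers and the function name are illustrative assumptions, and real sizing would also account for unit efficiency and room layout.

```python
import math


def n_plus_one_plan(heat_load_kw: float, unit_capacity_kw: float) -> dict:
    """Size an N+1 cooling plant for a given heat load.

    N is the number of units needed to carry the load. One extra unit is
    installed so any single unit can fail or be serviced without losing cooling.
    """
    if heat_load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("heat load and unit capacity must be positive")
    units_required = math.ceil(heat_load_kw / unit_capacity_kw)
    units_installed = units_required + 1
    installed_capacity_kw = units_installed * unit_capacity_kw
    return {
        "units_required": units_required,
        "units_installed": units_installed,
        "installed_capacity_kw": installed_capacity_kw,
        # Share of purchased capacity that is not needed in normal operation.
        "idle_fraction": 1.0 - heat_load_kw / installed_capacity_kw,
    }


# Hypothetical small server room: 8 kW of heat, 10 kW cooling units.
plan = n_plus_one_plan(heat_load_kw=8.0, unit_capacity_kw=10.0)
print(plan)
```

The smaller the room, the larger the idle share tends to be, because the one redundant unit is a bigger fraction of the total plant.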

Physical Security and Compliance Certification Costs

If your organisation handles regulated data (financial records, personal health information, payment card data), the physical security requirements attached to that data extend to wherever the servers live. PCI DSS, HIPAA, SOC 2, and provincial health privacy legislation all carry expectations about physical access controls, CCTV retention, visitor management, and environmental monitoring.

Meeting those requirements in an on-premise environment is not a one-time project. It is an ongoing operational commitment that requires documented procedures, internal audits, and periodic third-party assessments.

The certifications most commonly required by Canadian enterprises operating regulated workloads include:

- SOC 2 Type II: Third-party audit of security, availability, and confidentiality controls. Initial audit costs typically range from $30,000 to $100,000 CAD depending on scope, with annual surveillance audits required to maintain status.

- ISO 27001: Information security management certification requiring a formal implementation project, internal audit capability, and annual external surveillance audits. Total first-year costs commonly exceed $50,000 CAD for mid-sized organisations.

- PCI DSS: Payment card data security standard with annual on-site assessments by a Qualified Security Assessor for Level 1 merchants. QSA engagement costs alone can run $40,000 to $100,000 CAD per year.

- HIPAA: While U.S.-originated, HIPAA compliance is often important for Canadian organisations handling U.S. patient data or working with U.S. healthcare partners. It carries no fixed audit cost but requires documented risk assessments, policies, and physical safeguard verification.

- CSAE 3416 / ISAE 3402: Canadian and international equivalents of SOC 1, required by organisations in financial services. Costs are comparable to SOC 2 engagements.

None of these are achieved once and forgotten. Each carries an annual maintenance cost in auditor fees, internal staff time, remediation work, and documentation overhead.

A purpose-built colocation data centre that already holds these certifications distributes those costs across its entire customer base, which is why accessing a certified facility is almost always cheaper than building and maintaining certification in a privately operated environment.

Staffing, On-Call Coverage, and Facilities Management

The staffing cost of on-premise infrastructure is almost always underestimated, because it is rarely tracked as a discrete line item.

When a systems administrator spends four hours troubleshooting a failed CRAC unit, that time is usually absorbed into a general IT bucket. When a facilities manager gets called at midnight because a generator alarm triggered, the cost of that call sits in an HR record, not an infrastructure budget.

Multiply that across a team over 12 months, and the numbers become significant. Managed services attached to a colocation arrangement (remote hands, 24/7 monitoring, and on-site technical support) provide an alternative model where specialist expertise is available on demand without requiring your organisation to carry those headcount costs permanently.

Hardware Refresh Cycles and Stranded Capital

Servers have a useful operational life of approximately three to five years. After that, performance degrades relative to current hardware, warranty coverage expires, and support costs increase.

In an on-premise model, the capital tied up in server infrastructure is illiquid and depreciating. When it comes time to refresh, the organisation faces simultaneous capital outlays for new hardware while absorbing the sunk cost of equipment that no longer holds value.

Colocation does not eliminate hardware refresh cycles, because you still own the servers, but it decouples the facility investment from the hardware investment. You are not simultaneously trying to refresh servers and upgrade the power delivery infrastructure they sit in.

What Purpose-Built Colocation Facilities Deliver That Others Can't

Not every workload is the same, and the case for colocation is stronger in some contexts than others. These are the scenarios where the infrastructure limitations of on-premise environments become genuinely hard to work around.

High-Density and AI Workloads Outgrow Most On-Prem Environments

Modern GPU clusters used for AI inference and training pull 20kW to 40kW or more per rack. The physical space in most commercial office buildings or even purpose-built server rooms was designed around 3kW to 5kW per rack at most.
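The density gap is easier to appreciate with a quick calculation. The sketch below takes a hypothetical legacy room with 10 rack positions designed at 5 kW each (an assumed size, purely for illustration) and asks how many racks its total power budget could feed at higher densities. It ignores per-circuit limits, airflow, and floor loading, all of which usually make the real picture worse.

```python
def racks_supported(room_budget_kw: float, per_rack_kw: float) -> int:
    """Whole racks that a fixed power (and matching cooling) budget can feed."""
    if room_budget_kw < 0 or per_rack_kw <= 0:
        raise ValueError("budget must be non-negative and rack draw positive")
    return int(room_budget_kw // per_rack_kw)


# Hypothetical legacy room: 10 rack positions designed at 5 kW each.
LEGACY_POSITIONS = 10
LEGACY_DESIGN_KW = 5.0
room_budget_kw = LEGACY_POSITIONS * LEGACY_DESIGN_KW  # 50 kW in total

for density_kw in (5.0, 20.0, 40.0):
    count = racks_supported(room_budget_kw, density_kw)
    print(f"{density_kw:>4.0f} kW racks supported: {count}")
```

Under these assumptions, the same room that holds ten conventional racks can power only one or two GPU racks, leaving most of its floor space unusable.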

Retrofitting a building to support high-density compute requires a complete re-engineering of the power delivery infrastructure, the cooling system, and often the structural floor loading. That is not a project most organisations want to take on, and the timeline to do it properly is measured in months or years.

Purpose-built colocation facilities designed to support high-density AI workloads already have the power density, liquid or precision cooling infrastructure, and electrical distribution architecture in place. The capacity is available today, not at the end of a capital project.

Disaster Recovery Without Building a Second Site

A proper disaster recovery architecture requires geographic separation between a primary site and a recovery site. In an on-premise model, that means either owning or leasing a second physical location, equipping it with redundant infrastructure, and maintaining it continuously.

For most organisations below a certain scale, that is cost-prohibitive, which is why so many DR plans exist on paper but haven't been genuinely validated.

Colocation with a provider that operates multiple facilities across different cities makes geographic DR accessible without the capital commitment of a second owned site. Data can replicate between facilities, and recovery environments can be provisioned in a second market without the organisation having to operate the facility itself.

Carrier-Neutral Connectivity and Network Flexibility

One of the less-discussed advantages of purpose-built colocation is what sits inside the facility beyond the physical infrastructure. Carrier-neutral data centres give customers direct access to multiple network providers from a single location with no single-carrier dependency, no forced bundling, and the ability to negotiate connectivity independently from the facility contract.

For on-premise infrastructures, connectivity is whatever the building's landlord negotiated or whatever a single ISP provides. Redundant network paths require separate contracts, separate physical ingress points, and ongoing management of multiple provider relationships.

Inside a carrier-neutral colocation facility, that diversity is already architected in. Customers can access multiple carriers, connect directly to cloud on-ramps, and establish cross-connects to other organisations in the same facility. This kind of high-availability connectivity is genuinely difficult to replicate in a privately operated environment, and for teams running latency-sensitive or compliance-driven workloads, it is a major upgrade.

Uptime Standards That Most On-Premise Environments Cannot Match

Uptime Institute Tier III certification is not a marketing label. It is a specific technical standard that requires N+1 redundancy across all components and concurrent maintainability, meaning maintenance work can be performed without taking the facility offline.

According to the Uptime Institute's Annual Outage Analysis, 54% of significant outages cost organisations more than $100,000, with nearly one in five exceeding $1 million. Those numbers reflect what happens when infrastructure that was not built to enterprise redundancy standards encounters a real failure event.

Most on-premise server environments, even well-maintained ones, are not built to Tier III standards. They have single points of failure in their power distribution, their cooling, or their network path that are tolerated because building around them is expensive.

A certified colocation facility has eliminated those single points of failure by design, and that design has been independently verified. If uptime is a commercial or regulatory obligation rather than just a preference, the gap between a certified facility and a well-intentioned server room is not a small one.

Colocation Vs On-Premise: Which Should Your Business Choose?

The right infrastructure model depends heavily on what your organisation does, how it handles data, and what your compliance obligations look like. Here is how the decision tends to play out across the verticals where this question comes up most often.

Financial Services

Banks, credit unions, insurance companies, and investment firms operate under some of the strictest data handling requirements in Canada. OSFI B-10, PIPEDA, and provincial securities regulations create a compliance baseline that is expensive to maintain in a privately operated environment.

Add the foreign jurisdiction exposure risk that comes with U.S.-owned providers, and the case for sovereign Canadian colocation becomes difficult to argue against. Most financial institutions that run on-premise today inherited that infrastructure. They did not choose it fresh.

Healthcare

Healthcare organisations sit under PHIPA in Ontario, Alberta's Health Information Act, and equivalent provincial legislation elsewhere, all of which carry explicit requirements around physical access controls, audit trails, and data residency.

On-premise can satisfy those requirements, but doing so means building and maintaining a compliant physical environment indefinitely.

Colocation in a certified facility that already holds the relevant audit documentation is a significantly lighter operational lift, and geographic DR between facilities addresses the business continuity requirements that health regulators increasingly expect to see documented.

Energy and Resources

Energy companies tend to run a mix of operational technology and enterprise IT, with some workloads that genuinely need to stay close to physical infrastructure and others that don't.

The OT side (SCADA systems, process control, and real-time telemetry) often stays on-premise for latency and integration reasons. The enterprise side (ERP, financial systems, HR, and collaboration) is a strong candidate for colocation. The practical answer for most energy organisations is a hybrid model rather than an either/or.

Technology and SaaS

For tech companies and SaaS providers, the decision usually comes down to density and growth trajectory.

Early-stage companies often start in cloud environments for flexibility. As workloads mature and become more predictable, the economics of colocation improve significantly, particularly for compute-heavy applications where cloud egress fees and unpredictable scaling costs start to add up.

Canadian SaaS companies with customers that carry data residency requirements also benefit from being able to point to sovereign Canadian infrastructure.

Government and Public Sector

Government and public sector organisations face some of the most prescriptive data residency requirements of any vertical. Federal and provincial policies increasingly require that sensitive workloads stay on Canadian soil, operated by Canadian entities.

On-premise satisfies that requirement, but creates a facility management burden that most government IT teams are not resourced to handle well. Colocation with a fully sovereign Canadian provider like Qu Data Centres (Canadian-owned, Canadian-operated, no foreign parent) is often the cleaner compliance answer and the more operationally sustainable one.

How Canadian Data Sovereignty Changes the Calculation

For Canadian IT leaders, the colocation versus on-premise decision has a layer that most generic comparisons never address. Data sovereignty is now a material compliance consideration, and it changes how you evaluate any infrastructure provider, including colocation providers.

Data Residency Alone Does Not Equal Sovereignty

The most common misunderstanding is that sovereignty is satisfied by choosing a provider with servers on Canadian soil. Physical location is necessary but not sufficient. What matters equally is the legal jurisdiction governing the entity operating the infrastructure and whether a foreign court could compel data disclosure regardless of where the hardware sits.

The U.S. CLOUD Act is the specific mechanism that creates exposure. Under it, U.S. law enforcement can compel a U.S.-headquartered company to produce data stored anywhere in the world, including in Canada.

As Osler noted in their October 2025 analysis, Ottawa and provincial governments are facing active pressure to protect Canadian data from foreign access under exactly this provision. A U.S.-incorporated or U.S.-owned colocation provider creates a sovereignty gap even when its servers are physically in Canada.

A fully Canadian-owned, Canadian-incorporated provider operating under Canadian jurisdiction eliminates that gap entirely.

Canadian Compliance Frameworks That Affect Your Infrastructure Decision

PIPEDA requires organisations to ensure personal information receives comparable protection when handled by third parties, including an assessment of the legal framework in the receiving jurisdiction.

The Office of the Privacy Commissioner is explicit that accountability follows the data. In 2025, a financial services organisation was penalised C$450,000 for failing to account for CLOUD Act implications in its privacy impact assessment.

OSFI's revised Guideline B-10 requires federally regulated financial institutions to assess foreign jurisdiction access risk for all material third-party arrangements. This is a standard that U.S.-owned colocation providers struggle to satisfy cleanly. Provincial health legislation, including PHIPA and Alberta's Health Information Act, carries equivalent implications for healthcare organisations.

Both on-premise and sovereign Canadian colocation can satisfy these frameworks. What cannot satisfy them is assuming that a Canadian address on a U.S.-owned facility resolves the compliance question.

Why Qu Data Centres Is the Right Colocation Partner for Canadian Enterprises

When the colocation decision comes down to both infrastructure quality and sovereignty, most providers force a trade-off between the two. Either the facility is enterprise-grade but U.S.-owned, or the provider is Canadian but doesn't have the capacity or certifications to support regulated enterprise workloads. Qu Data Centres is built to remove that trade-off.

Qu operates nine purpose-built facilities across five Canadian markets: Calgary, Edmonton, Ottawa, Toronto, and London, Ontario. Four of those facilities hold Uptime Institute Tier III certification.

Every facility carries SOC 1, SOC 2, ISO 27001, PCI DSS, HIPAA, and CSAE/ISAE certifications. Qu is fully Canadian-owned and operated, with 130+ Canadian employees and no foreign entity in the ownership chain, which means no CLOUD Act exposure by design.

From a single cabinet to a multi-megawatt private suite, the deployment options scale to match where your organisation actually is, not where a hyperscaler's pricing model assumes you should be. Qu's connectivity solutions include access to 15+ carrier networks and carrier-neutral interconnection, and its interconnection options support hybrid cloud architectures without locking you into a single provider's ecosystem.

The colocation decision is one of the more consequential infrastructure decisions a Canadian IT leader makes. Make it with a partner who has the right facilities. Book a tour with Qu Data Centres to learn more today.

Frequently Asked Questions About Colocation Vs On-Premise Infrastructure

What Is the Difference Between Colocation and Managed Hosting?

In colocation, you own your hardware and place it in a third-party facility. You manage your own servers; the facility manages the physical environment around them. In managed hosting, the provider owns and operates the hardware on your behalf. The distinction matters for compliance, control, and cost. Colocation gives you more direct control over your environment while still offloading facility overhead.

Is Colocation Cheaper Than On-Premise in Canada?

It depends on scale and what costs you are measuring. When total cost of ownership is calculated across power, cooling, compliance certification, staffing, and hardware refresh cycles over a three-to-five-year period, colocation is typically more cost-effective for most mid-market Canadian enterprises. Organisations that already own a recent, well-engineered facility may find the break-even point further out.

Who Owns the Servers in a Colocation Arrangement?

The customer does. Colocation means you place your organisation's hardware (servers, networking equipment, storage) inside a professionally managed facility. The data centre provides the physical environment, power, and connectivity infrastructure. Ownership and management of the hardware remains entirely with the customer.

Can Colocation Facilities Support AI and High-Density Workloads?

Purpose-built colocation facilities engineered for high-density compute can support 20kW to 40kW per rack or more, which is what modern GPU and AI workloads require. Most commercial office environments and older server rooms were designed for 3kW to 5kW per rack. If your organisation is deploying or planning AI infrastructure, the power density limitations of on-premise environments are worth assessing carefully before committing to a deployment model.

What Certifications Should a Colocation Provider Have?

The core certifications to look for are SOC 1, SOC 2, ISO 27001, and PCI DSS at minimum. For regulated industries, HIPAA compliance and CSAE/ISAE certifications are also relevant. Uptime Institute Tier III certification indicates the facility is built and operated to standards that guarantee N+1 redundancy and concurrent maintainability, meaning maintenance work can be carried out without taking the facility offline.

Sources Used for This Article

- Uptime Institute: "Annual Outage Analysis 2024" - uptimeinstitute.com/resources/research-and-reports/annual-outage-analysis-2024

- Osler, Hoskin & Harcourt LLP: "Data sovereignty in light of the CLOUD Act – Back to the future?" - osler.com/en/insights/updates/data-sovereignty-in-light-of-the-cloud-act-back-to-the-future/

- Office of the Privacy Commissioner of Canada: "Guidelines for Processing Personal Data Across Borders" - priv.gc.ca/en/privacy-topics/airports-and-borders/gl_dab_090127/

- Blake, Cassels & Graydon LLP: "OSFI’s New Guideline B-10: Third-Party Risk Management" - blakes.com/insights/osfi-s-new-guideline-b-10-third-party-risk-managem/

- Government of Ontario: "Personal Health Information Protection Act, 2004, S.O. 2004, c. 3, Sched. A" - ontario.ca/laws/statute/04p03

Quick Answer: Colocation and on-premise infrastructure are both models for housing your own physical servers, but they differ significantly in who owns and manages the facility around them.

On-premise means your organisation bears every cost, power, cooling, physical security, compliance, and staffing, within a space you control. Colocation means you keep ownership of your hardware while housing it in a professionally managed, purpose-built data centre that handles the facility infrastructure.

For most Canadian enterprises, the financials favour colocation once the full picture is on the table, but the right answer depends on workload type, compliance requirements, and how your organisation accounts for total cost of ownership.

Key Takeaways

When an organisation builds or expands its server infrastructure, the decision about where those servers live tends to be made too quickly. A rack in the server room feels like the obvious choice. It is physically close, it feels controllable, and the sunk cost of existing hardware makes change seem expensive.

The problem is that most of those instincts don't hold up when you do the math. Power bills compound annually. Cooling systems age and need replacement. Compliance audits start asking harder questions about physical security documentation and fire suppression specifications. On-call coverage gets absorbed by IT staff who should be working on something else.

At the same time, colocation keeps getting more and more popular. It is no longer just a place to put hardware when you run out of room.

Modern colocation facilities are engineered for the power densities that AI and high-performance compute workloads actually require, and the connectivity ecosystems inside those facilities have become infrastructure assets in their own right.

Here’s what you need to know.

What Is Colocation Vs On-Premise Infrastructure?

Before comparing costs and use cases, it helps to be precise about what these two models actually mean. They are often conflated with managed hosting or cloud services, and that confusion leads to the wrong questions being asked.

How On-Premise Server Infrastructure Works

On-premise infrastructure means your organisation owns the servers, owns or leases the physical space they occupy, and bears full operational responsibility for everything around them.

That includes the power delivery systems, the uninterruptible power supplies, the cooling equipment, physical access controls, fire suppression systems, network cabling, and the human beings who maintain all of it. When something fails at 2 AM, a cooling unit, a UPS bank, a power distribution unit, it is your problem to resolve, on your timeline, with your resources.

This model gives organisations maximum direct control. It also means maximum direct exposure to every cost and risk that comes with operating a data centre environment, regardless of the scale.

What Colocation Means For Your Server Architecture

Colocation is frequently misunderstood as a form of outsourced IT. It is not.

In a colocation arrangement, your organisation still owns the physical servers, networking gear, and storage equipment. What you are renting is space inside a professionally managed facility, along with access to that facility's power infrastructure, cooling systems, physical security, fire suppression, and carrier connectivity. The data centre operator keeps the lights on, the temperature stable, and the generators fuelled. Your team manages the hardware that sits inside it.

The difference here matters because it affects how you evaluate the trade-off. Colocation is not giving up control of your environment. It is offloading the facility management that sits around your environment to specialists who do it at scale.

Practical Considerations When Running On-Premise Infrastructure

On-premise infrastructure is not inherently the wrong choice, and for certain organisations it represents a legitimate, well-reasoned decision.

The problem is not the model itself; it’s the responsibility. There are real advantages to running your own environment, but those advantages come with a set of ongoing costs that tend to get underestimated at budget time and felt acutely over the following few years.

Direct Control Over Your Physical Environment

The most defensible argument for on-premise infrastructure is ownership of the physical environment. Your team has unmediated access to the hardware at any time, with no ticketing process, no access request, and no dependency on a third party's response time.

For organisations with strong in-house IT teams, that direct access translates into faster troubleshooting cycles and the ability to make hardware-level changes without coordinating with anyone outside the organisation.

There is also a psychological dimension to this that matters in regulated environments. Audit committees and boards often find it easier to visualise risk when the infrastructure is physically present and directly controlled.

That said, direct access only delivers value when the team exercising it is adequately staffed, properly trained, and available around the clock. For organisations that don't, and that describes a larger share of Canadian mid-market organisations than most IT leaders are comfortable admitting, that control exists on paper more than in practice.

Power and Cooling Add Up Faster Than Expected

Running servers reliably requires more than plugging them in. A properly configured on-premise environment needs each of the following to function at a baseline level:

Each of these is a capital purchase. Each one also carries ongoing maintenance costs. None of it is optional if the environment is housing workloads that cannot tolerate unplanned downtime.

The compounding effect is where most companies blow through their budgets. A single rack provisioned for a modest 5kW, averaging roughly 3kW to 4kW under typical workloads rather than running at max load, consumes about 25,000–35,000 kWh annually. At commercial electricity rates in Ontario or Alberta, that translates to $2,500 to $6,000 per year in electricity costs for one rack alone, before adding the cooling overhead (often 30–50% more) needed to remove that heat. A rack held at a constant 5kW would draw about 43,800 kWh a year, so these numbers climb significantly as you push systems toward max load.
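The per-rack figures above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only. It assumes an average draw of 3kW to 4kW, which is what the quoted 25,000–35,000 kWh range implies over 8,760 hours (a constant 5kW would come to about 43,800 kWh). It also assumes rates of $0.10 to $0.17 per kWh, which are back-calculated from the dollar range in this article and are not published Ontario or Alberta tariffs. The function name and parameters are hypothetical.

```python
HOURS_PER_YEAR = 8760  # 24 hours x 365 days


def annual_rack_cost(avg_kw, rate_per_kwh, cooling_overhead):
    """Return (kWh per year, IT electricity cost, cost including cooling).

    avg_kw           -- assumed average draw of the rack in kilowatts
    rate_per_kwh     -- assumed commercial electricity rate in $/kWh
    cooling_overhead -- extra energy for cooling as a fraction (0.30 = 30%)
    """
    kwh = avg_kw * HOURS_PER_YEAR
    it_cost = kwh * rate_per_kwh
    total_cost = it_cost * (1 + cooling_overhead)
    return kwh, it_cost, total_cost


# Low and high ends of the assumed range: (avg kW, $/kWh, cooling overhead)
for avg_kw, rate, cooling in [(3.0, 0.10, 0.30), (4.0, 0.17, 0.50)]:
    kwh, it_cost, total = annual_rack_cost(avg_kw, rate, cooling)
    print(f"{avg_kw} kW avg: {kwh:,.0f} kWh, "
          f"${it_cost:,.0f} electricity, ${total:,.0f} with cooling")
```

Substituting your own metered draw and the rate from your utility bill gives a first-pass number to compare against a colocation quote.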

An N+1 cooling configuration, the minimum standard for mission-critical environments, means you are purchasing and maintaining redundant capacity that largely sits idle.

Physical Security and Compliance Certification Costs

If your organisation handles regulated data such as financial records, personal health information, or payment card data, the physical security requirements attached to that data extend to wherever the servers live. PCI DSS, HIPAA, SOC 2, and provincial health privacy legislation all carry expectations about physical access controls, CCTV retention, visitor management, and environmental monitoring.

Meeting those requirements in an on-premise environment is not a one-time project. It is an ongoing operational commitment that requires documented procedures, internal audits, and periodic third-party assessments.

The certifications most commonly required by Canadian enterprises operating regulated workloads include:

None of these are achieved once and forgotten. Each carries an annual maintenance cost in auditor fees, internal staff time, remediation work, and documentation overhead.

A purpose-built colocation data centre that already holds these certifications distributes those costs across its entire customer base, which is why accessing a certified facility is almost always cheaper than building and maintaining certification in a privately operated environment.

Staffing, On-Call Coverage, and Facilities Management

The staffing cost of on-premise infrastructure is almost always underestimated, because it is rarely tracked as a discrete line item.

When a systems administrator spends four hours troubleshooting a failed CRAC unit, that time is usually absorbed into a general IT bucket. When a facilities manager gets called at midnight because a generator alarm triggered, the cost of that call sits in an HR record, not an infrastructure budget.

Multiply that across a team over 12 months, and the numbers become significant. Managed services attached to a colocation arrangement, such as remote hands, 24/7 monitoring, and on-site technical support, provide an alternative model where specialist expertise is available on demand without requiring your organisation to carry those headcount costs permanently.

Hardware Refresh Cycles and Stranded Capital

Servers have a useful operational life of approximately three to five years. After that, performance degrades relative to current hardware, warranty coverage expires, and support costs increase.

In an on-premise model, the capital tied up in server infrastructure is illiquid and depreciating. When it comes time to refresh, the organisation faces simultaneous capital outlays for new hardware while absorbing the sunk cost of equipment that no longer holds value.

Colocation does not eliminate hardware refresh cycles, since you still own the servers, but it decouples the facility investment from the hardware investment. You are not simultaneously trying to refresh servers and upgrade the power delivery infrastructure they sit in.

What Purpose-Built Colocation Facilities Deliver That Others Can't

Not every workload is the same, and the case for colocation is stronger in some contexts than others. These are the scenarios where the infrastructure limitations of on-premise environments become genuinely hard to work around.

High-Density and AI Workloads Outgrow Most On-Prem Environments

Modern GPU clusters used for AI inference and training pull 20kW to 40kW or more per rack. The physical space in most commercial office buildings or even purpose-built server rooms was designed around 3kW to 5kW per rack at most.
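To make the density gap concrete, the sketch below compares a single GPU rack against a room's per-rack design budget. The 20-rack room is a hypothetical example, the function names are illustrative, and the calculation looks at electrical budget only. It deliberately ignores cooling capacity and floor loading, which are often the tighter constraints.

```python
import math


def legacy_racks_equivalent(gpu_rack_kw, design_kw_per_rack):
    """How many design-budget racks' worth of power one GPU rack consumes."""
    return gpu_rack_kw / design_kw_per_rack


def gpu_racks_supported(room_racks, design_kw_per_rack, gpu_rack_kw):
    """Whole GPU racks that fit inside the room's total power budget."""
    room_budget_kw = room_racks * design_kw_per_rack
    return math.floor(room_budget_kw / gpu_rack_kw)


# Hypothetical room: 20 rack positions designed for 5kW each (100kW total).
print(legacy_racks_equivalent(40, 5))   # power of one 40kW rack, in 5kW racks
print(gpu_racks_supported(20, 5, 40))   # 40kW racks the 100kW budget can feed
```

Under these assumptions a single 40kW rack uses the power budget of eight conventional racks, and the whole room can feed only two of them.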

Retrofitting a building to support high-density compute requires a complete re-engineering of the power delivery infrastructure, the cooling system, and often the structural floor loading. That is not a project most organisations want to take on, and the timeline to do it properly is measured in months or years.

Purpose-built colocation facilities designed to support high-density AI workloads already have the power density, liquid or precision cooling infrastructure, and electrical distribution architecture in place. The capacity is available today, not at the end of a capital project.

Disaster Recovery Without Building a Second Site

A proper disaster recovery architecture requires geographic separation between a primary site and a recovery site. In an on-premise model, that means either owning or leasing a second physical location, equipping it with redundant infrastructure, and maintaining it continuously.

For most organisations below a certain scale, that is cost-prohibitive, which is why so many DR plans exist on paper but haven't been genuinely validated.

Colocation with a provider that operates multiple facilities across different cities makes geographic DR accessible without the capital commitment of a second owned site. Data can replicate between facilities, and recovery environments can be provisioned in a second market without the organisation having to operate the facility itself.

Carrier-Neutral Connectivity and Network Flexibility

One of the less-discussed advantages of purpose-built colocation is what sits inside the facility beyond the physical infrastructure. Carrier-neutral data centres give customers direct access to multiple network providers from a single location with no single-carrier dependency, no forced bundling, and the ability to negotiate connectivity independently from the facility contract.

For on-premise infrastructure, connectivity is whatever the building's landlord negotiated or whatever a single ISP provides. Redundant network paths require separate contracts, separate physical ingress points, and ongoing management of multiple provider relationships.

Inside a carrier-neutral colocation facility, that diversity is already architected in. Customers can access multiple carriers, connect directly to cloud on-ramps, and establish cross-connects to other organisations in the same facility. This kind of high-availability connectivity is genuinely difficult to replicate in a privately operated environment, and for teams running latency-sensitive or compliance-driven workloads, it is a major upgrade.

Uptime Standards That Most On-Premise Environments Cannot Match

Uptime Institute Tier III certification is not a marketing label. It is a specific technical standard that requires N+1 redundancy across all components, concurrent maintainability, and a design that allows maintenance work to be performed without taking the facility offline.

According to the Uptime Institute's Annual Outage Analysis, 54% of significant outages cost organisations more than $100,000, with nearly one in five exceeding $1 million. Those numbers reflect what happens when infrastructure that was not built to enterprise redundancy standards encounters a real failure event.

Most on-premise server environments, even well-maintained ones, are not built to Tier III standards. They have single points of failure in their power distribution, their cooling, or their network path that are tolerated because building around them is expensive.

A certified colocation facility has eliminated those single points of failure by design, and that design has been independently verified. If uptime is a commercial or regulatory obligation rather than just a preference, the gap between a certified facility and a well-intentioned server room is not a small one.

Colocation Vs On-Premise: Which Should Your Business Choose?

The right infrastructure model depends heavily on what your organisation does, how it handles data, and what your compliance obligations look like. Here is how the decision tends to play out across the verticals where this question comes up most often.

Financial Services

Banks, credit unions, insurance companies, and investment firms operate under some of the strictest data handling requirements in Canada. OSFI B-10, PIPEDA, and provincial securities regulations create a compliance baseline that is expensive to maintain in a privately operated environment.

Add the foreign jurisdiction exposure risk that comes with U.S.-owned providers, and the case for sovereign Canadian colocation becomes difficult to argue against. Most financial institutions that run on-premise today inherited that infrastructure. They did not choose it fresh.

Healthcare

Healthcare organisations sit under PHIPA in Ontario, Alberta's Health Information Act, and equivalent provincial legislation elsewhere, all of which carry explicit requirements around physical access controls, audit trails, and data residency.

On-premise can satisfy those requirements, but doing so means building and maintaining a compliant physical environment indefinitely.

Colocation in a certified facility that already holds the relevant audit documentation is a significantly lighter operational lift, and geographic DR between facilities addresses the business continuity requirements that health regulators increasingly expect to see documented.

Energy and Resources

Energy companies tend to run a mix of operational technology and enterprise IT, with some workloads that genuinely need to stay close to physical infrastructure and others that don't.

The OT side (SCADA systems, process control, and real-time telemetry) often stays on-premise for latency and integration reasons. The enterprise side (ERP, financial systems, HR, and collaboration) is a strong candidate for colocation. The practical answer for most energy organisations is a hybrid model rather than an either/or.

Technology and SaaS

For tech companies and SaaS providers, the decision usually comes down to density and growth trajectory.

Early-stage companies often start in cloud environments for flexibility. As workloads mature and become more predictable, the economics of colocation improve significantly, particularly for compute-heavy applications where cloud egress fees and unpredictable scaling costs start to add up.

Canadian SaaS companies with customers that carry data residency requirements also benefit from being able to point to sovereign Canadian infrastructure.

Government and Public Sector

Government and public sector organisations face some of the most prescriptive data residency requirements of any vertical. Federal and provincial policies increasingly require that sensitive workloads stay on Canadian soil, operated by Canadian entities.

On-premise satisfies that requirement, but creates a facility management burden that most government IT teams are not resourced to handle well. Colocation with a fully sovereign Canadian provider like Qu Data Centres (Canadian-owned, Canadian-operated, no foreign parent) is often the cleaner compliance answer and the more operationally sustainable one.

How Canadian Data Sovereignty Changes the Calculation

For Canadian IT leaders, the colocation versus on-premise decision has a layer that most generic comparisons never address. Data sovereignty is now a material compliance consideration, and it changes how you evaluate any infrastructure provider, including colocation providers.

Data Residency Alone Does Not Equal Sovereignty

The most common misunderstanding is that sovereignty is satisfied by choosing a provider with servers on Canadian soil. Physical location is necessary but not sufficient. What matters equally is the legal jurisdiction governing the entity operating the infrastructure and whether a foreign court could compel data disclosure regardless of where the hardware sits.

The U.S. CLOUD Act is the specific mechanism that creates exposure. Under it, U.S. law enforcement can compel a U.S.-headquartered company to produce data stored anywhere in the world, including in Canada.

As Osler noted in their October 2025 analysis, Ottawa and provincial governments are facing active pressure to protect Canadian data from foreign access under exactly this provision. A U.S.-incorporated or U.S.-owned colocation provider creates a sovereignty gap even when its servers are physically in Canada.

A fully Canadian-owned, Canadian-incorporated provider operating under Canadian jurisdiction eliminates that gap entirely.

Canadian Compliance Frameworks That Affect Your Infrastructure Decision

PIPEDA requires organisations to ensure personal information receives comparable protection when handled by third parties, including an assessment of the legal framework in the receiving jurisdiction.

The Office of the Privacy Commissioner is explicit that accountability follows the data. In 2025, a financial services organisation was penalised C$450,000 for failing to account for CLOUD Act implications in its privacy impact assessment.

OSFI's revised Guideline B-10 requires federally regulated financial institutions to assess foreign jurisdiction access risk for all material third-party arrangements. This is a standard that U.S.-owned colocation providers struggle to satisfy cleanly. Provincial health legislation, including PHIPA and Alberta's Health Information Act, carries equivalent implications for healthcare organisations.

Both on-premise and sovereign Canadian colocation can satisfy these frameworks. What cannot satisfy them is assuming that a Canadian address on a U.S.-owned facility resolves the compliance question.

Why Qu Data Centres Is the Right Colocation Partner for Canadian Enterprises

When the colocation decision comes down to both infrastructure quality and sovereignty, those two requirements usually force a trade-off with most providers. Either the facility is enterprise-grade but U.S.-owned, or the provider is Canadian but doesn't have the capacity or certifications to support regulated enterprise workloads. Qu Data Centres is built to solve exactly that equation.

Qu operates nine purpose-built facilities across five Canadian markets: Calgary, Edmonton, Ottawa, Toronto, and London, Ontario. Four of those facilities hold Uptime Institute Tier III certification.

Every facility carries SOC 1, SOC 2, ISO 27001, PCI DSS, HIPAA, and CSAE/ISAE certifications. Qu is fully Canadian-owned and operated, with 130+ Canadian employees and no foreign entity in the ownership chain, which means no CLOUD Act exposure by design.

From a single cabinet to a multi-megawatt private suite, the deployment options scale to match where your organisation actually is, not where a hyperscaler's pricing model assumes you should be. Qu's connectivity solutions include access to 15+ carrier networks and carrier-neutral interconnection, and its interconnection options support hybrid cloud architectures without locking you into a single provider's ecosystem.

The colocation decision is one of the more consequential infrastructure decisions a Canadian IT leader makes. Make it with a partner who has the right facilities. Book a tour with Qu Data Centres to learn more today.

Frequently Asked Questions About Colocation Vs On-Premise Infrastructure

What Is the Difference Between Colocation and Managed Hosting?

In colocation, you own your hardware and place it in a third-party facility. You manage your own servers; the facility manages the physical environment around them. In managed hosting, the provider owns and operates the hardware on your behalf. The distinction matters for compliance, control, and cost. Colocation gives you more direct control over your environment while still offloading facility overhead.

Is Colocation Cheaper Than On-Premise in Canada?

It depends on scale and what costs you are measuring. When total cost of ownership is calculated across power, cooling, compliance certification, staffing, and hardware refresh cycles over a three-to-five year period, colocation is typically more cost-effective for most mid-market Canadian enterprises. Organisations that already own a recent, well-engineered facility may find the break-even point further out.

Who Owns the Servers in a Colocation Arrangement?

The customer does. Colocation means you place your organisation's hardware (servers, networking equipment, storage) inside a professionally managed facility. The data centre provides the physical environment, power, and connectivity infrastructure. Ownership and management of the hardware remain entirely with the customer.

Can Colocation Facilities Support AI and High-Density Workloads?

Purpose-built colocation facilities engineered for high-density compute can support 20kW to 40kW per rack or more, which is what modern GPU and AI workloads require. Most commercial office environments and older server rooms were designed for 3kW to 5kW per rack. If your organisation is deploying or planning AI infrastructure, the power density limitations of on-premise environments are worth assessing carefully before committing to a deployment model.

What Certifications Should a Colocation Provider Have?

The core certifications to look for are SOC 1, SOC 2, ISO 27001, and PCI DSS at minimum. For regulated industries, HIPAA compliance and CSAE/ISAE certifications are also relevant. Uptime Institute Tier III certification indicates the facility is built and operated to standards that guarantee N+1 redundancy and concurrent maintainability, meaning maintenance work can be carried out without taking the facility offline.


Paul Miedzik is Senior Manager of Marketing at Qu Data Centres, with extensive experience in enterprise cloud and digital infrastructure across the Canadian tech sector.